Bring Your Own LLM

Holistics lets you connect your own OpenAI, Anthropic, or Gemini account, or an OpenAI-compatible endpoint, so you control cost, usage, and which models power your AI workflows.

You are responsible for configuring your own data and security controls with your provider. For reference, check out Holistics' data controls for default AI providers.

Supported AI providers

- OpenAI

- Anthropic

- Gemini

- OpenAI-compatible endpoints (e.g. OpenRouter, LiteLLM)

Model classes

When you use your own provider, you can assign a model to each class in Holistics:

- Agentic chat model: Used for conversational, multi-step workflows such as querying data, modeling, and building dashboards. We recommend choosing a model with strong reasoning to prioritize accuracy.

- Quick generation model: Used for fast, one-shot tasks such as generating field descriptions and commit messages. We recommend choosing a fast, low-cost model to prioritize speed.

You can mix models from different providers — for example, Claude Sonnet 4.6 for agentic chat and Gemini 3.1 Flash Lite for quick generation.
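To make the two-class assignment concrete, here is a minimal Python sketch of the mixed-provider example. It is purely illustrative: Holistics configures this through its settings UI, and `ModelChoice`, `validate_assignment`, the provider labels, and the class keys are hypothetical names, not a Holistics config format or API. The sketch also assumes that both classes need a model assigned, which the text does not state. The model strings are the display names used above, and the IDs your provider expects may differ.

```python
from dataclasses import dataclass

# Providers listed as supported in this document (labels are hypothetical).
SUPPORTED_PROVIDERS = {"openai", "anthropic", "gemini", "openai-compatible"}

# The two model classes described above (keys are hypothetical).
MODEL_CLASSES = ("agentic_chat", "quick_generation")


@dataclass(frozen=True)
class ModelChoice:
    provider: str  # hypothetical provider label, not a Holistics identifier
    model: str     # display name; the provider's actual model ID may differ


def validate_assignment(assignment: dict[str, ModelChoice]) -> None:
    """Raise ValueError if a class is unassigned or a provider is unsupported."""
    # Assumption: every model class must have a model assigned.
    for model_class in MODEL_CLASSES:
        if model_class not in assignment:
            raise ValueError(f"no model assigned to {model_class}")
    for model_class, choice in assignment.items():
        if model_class not in MODEL_CLASSES:
            raise ValueError(f"unknown model class: {model_class}")
        if choice.provider not in SUPPORTED_PROVIDERS:
            raise ValueError(f"unsupported provider: {choice.provider}")


# The mixed-provider example from the text: strong reasoning for chat,
# a fast low-cost model for one-shot generation.
assignment = {
    "agentic_chat": ModelChoice("anthropic", "Claude Sonnet 4.6"),
    "quick_generation": ModelChoice("gemini", "Gemini 3.1 Flash Lite"),
}
validate_assignment(assignment)
```

Keeping the two classes as separate entries mirrors the trade-off described above: accuracy for multi-step chat, speed and cost for quick generation.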

Connect your own AI provider

Obtain your API key

- OpenAI API keys page

- Claude API keys page

- Gemini API key guide

- OpenAI-compatible endpoints: refer to your proxy's documentation

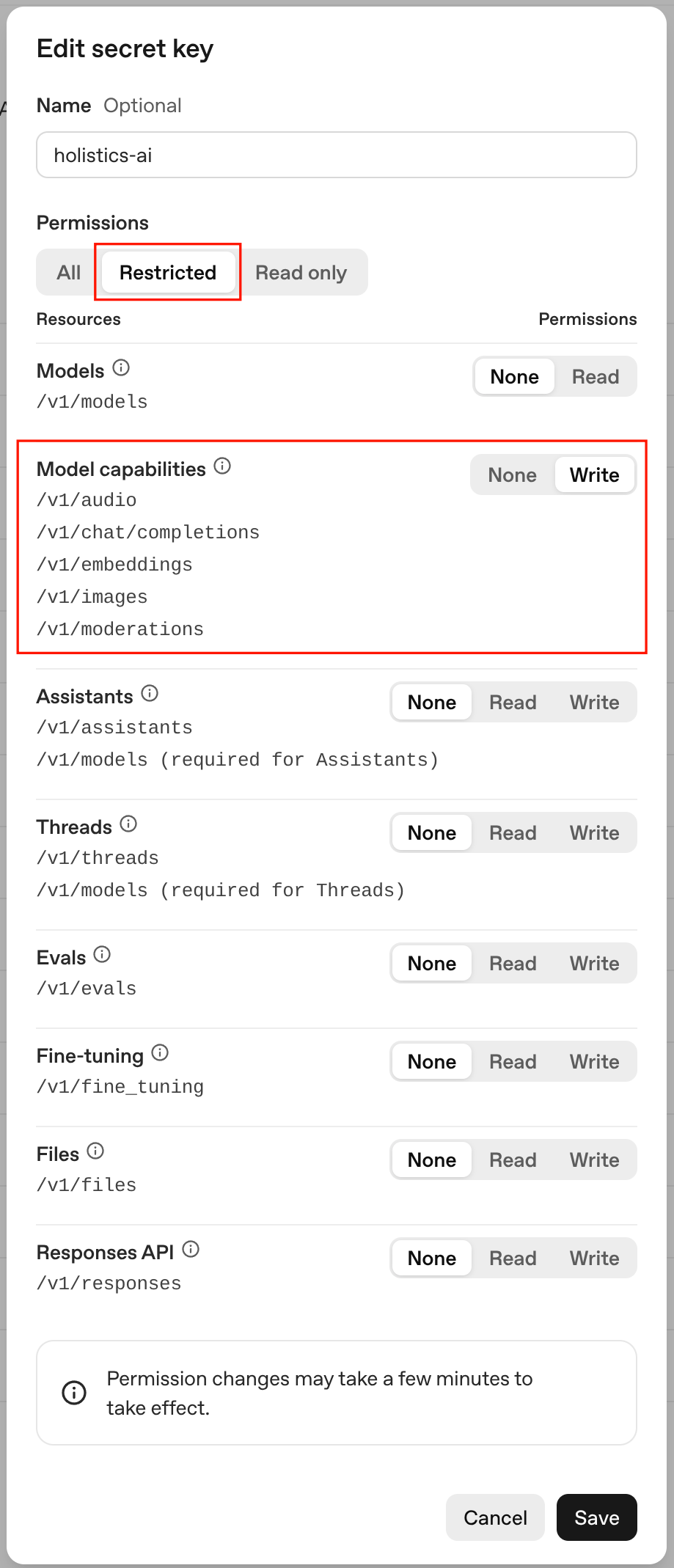

If you're using an OpenAI key, enable the Write permission for Model capabilities.

Configure your AI providers and models

In Holistics, go to Organization settings > AI settings > AI providers, enter your key, then choose your preferred models.

For OpenAI-compatible endpoints, you'll also need to provide the base URL (e.g. https://openrouter.ai/api/v1).
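Before entering a base URL and key in Holistics, it can help to confirm that the pair works on its own. The sketch below assumes your endpoint follows the common OpenAI-style convention of listing models at `GET {base_url}/models` with a `Bearer` token, so check your proxy's documentation if it differs. The function names `build_models_request` and `list_model_ids` are hypothetical helpers, not part of Holistics or any provider SDK.

```python
import json
import urllib.request


def build_models_request(base_url: str, api_key: str) -> urllib.request.Request:
    """Build a GET request for the endpoint's model listing."""
    # Strip a trailing slash so the joined URL has exactly one separator.
    url = base_url.rstrip("/") + "/models"
    return urllib.request.Request(
        url,
        headers={"Authorization": f"Bearer {api_key}"},
        method="GET",
    )


def list_model_ids(base_url: str, api_key: str, timeout: float = 10.0) -> list[str]:
    """Send the request and return the model IDs the endpoint reports."""
    request = build_models_request(base_url, api_key)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    # Assumption: OpenAI-style listings wrap the models in a "data" array.
    return [model["id"] for model in body.get("data", [])]
```

Calling `list_model_ids("https://openrouter.ai/api/v1", "<your key>")` should return a list of model IDs if the base URL and key are valid. An HTTP 401 or 404 error usually points to a wrong key or a base URL missing its version path.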